Attention In Transformers

https://www.youtube.com/watch?v=eMlx5fFNoYc
https://www.youtube.com/watch?v=OFS90-FX6pg&t=253s

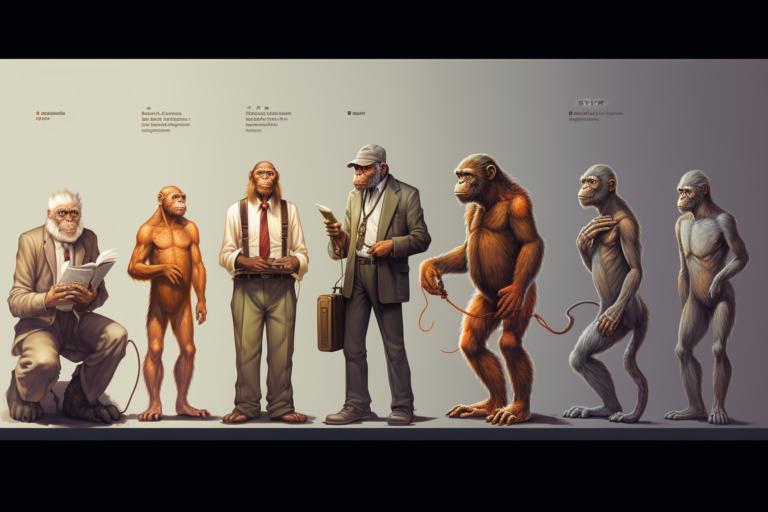

Darwinism is a term often used to describe the theory of biological evolution developed by the naturalist Charles Darwin. Darwin’s theory suggests that all species of organisms arise and develop through the natural selection of small, inherited variations that increase the individual’s ability to compete, survive, and reproduce. Darwin defined evolution as “descent with modification,”…

Here’s your quick list of the low-hanging fruit you can use to put your margins on overdrive. If you use this list whenever you find winning items, you will be more profitable than the average Amazon seller.