Similar Posts

Data Analysis vs. Data Science

By vomark

The distinction between “data analysis” and “data science” revolves around the scope and depth of the activities involved in each field. Here’s a breakdown of how these terms differ, covering data analysis, data science, and their overlap and practical use. Thus, while data analysis is a critical activity within data science, it does not encapsulate the full spectrum…

The Basics of NLP

By vomark

Natural Language Processing (NLP) is a field at the intersection of computer science, artificial intelligence, and linguistics. It’s concerned with the interactions between computers and human (natural) languages. Here are some of the basic concepts and components of NLP: 2. Part-of-Speech Tagging: 3. Named Entity Recognition (NER): 4. Syntax Analysis: 5. Semantic Analysis: 6. Sentiment…

ATS Resumes

By vomark

A.I. Power Prompts

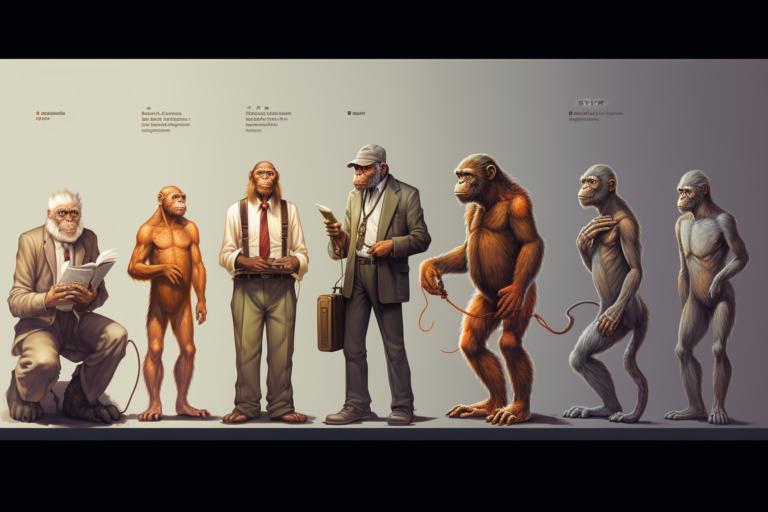

Darwinism

By vomark

Darwinism is a term often used to describe the theory of biological evolution developed by the naturalist Charles Darwin. Darwin’s theory suggests that all species of organisms arise and develop through the natural selection of small, inherited variations that increase the individual’s ability to compete, survive, and reproduce. Darwin defined evolution as “descent with modification,”…